Cognitive design principles are essentially research-based guidelines that take into account the limitations of human cognitive architecture. In other words, if we understand the processes by which students assimilate information, we can design educational multimedia accordingly so that students learn as efficiently as possible. The principles seem deceptively simple and intuitive, but there appears to be little meaningful uptake into the design of educational material. As a practitioner and postgraduate researcher, I’d like to illustrate the applicability of a few of the principles in relation to dynamic visualisations, i.e. videos and animations.

The shift from textbooks to videos

There are some inherent advantages to reading from a textbook.

- Text can be read slowly (as compared to audio tracks that run at one speed).

- Formatting such as headings and paragraph structure allows the learner to easily locate and re-read sections and/or examine images at length (as compared to a video, particularly talking-head footage, where it is difficult to locate and revise a subsection).

- A textbook allows one a bird’s eye view of the subject material and thereby indicates the thematic structure of the content (whereas the video only unfolds over time).

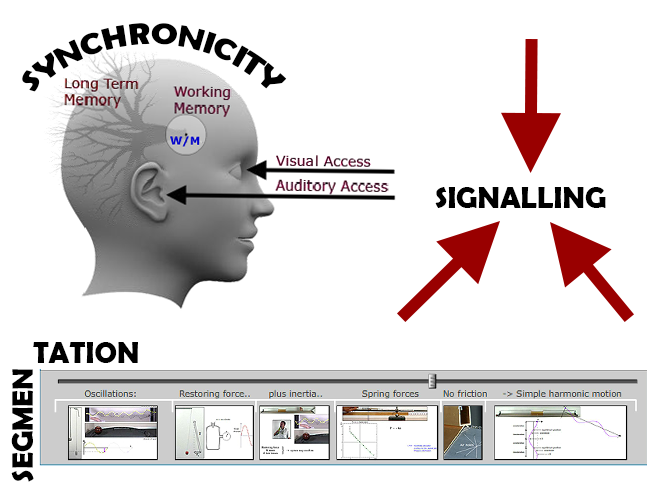

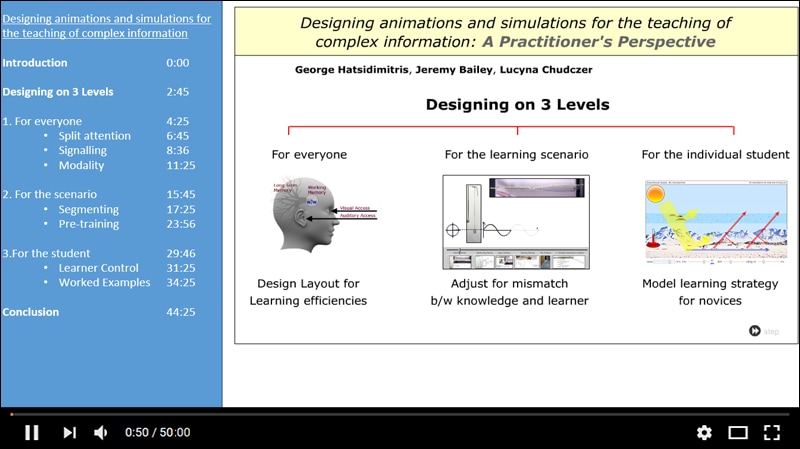

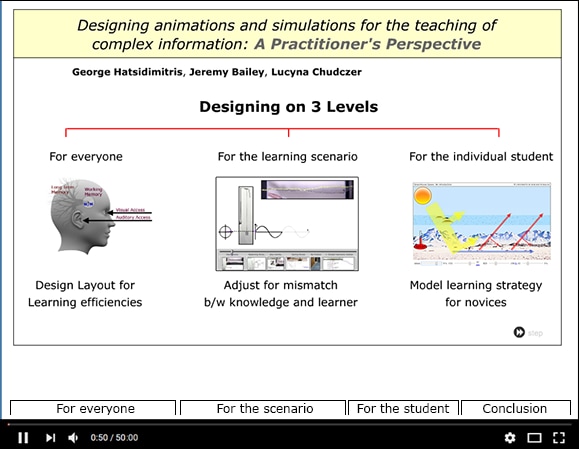

In essence, the student reading a textbook can see the “segmentation” of the information into conceptually discrete sections that can be easily identified and reviewed at leisure. Video, on the other hand, is inherently transient and thus the information is fleeting. One can only see an instant at a time, so locating the subsections of the material for review purposes can be difficult and time-consuming. One strategy to overcome this shortfall is to index the video into conceptually discrete segments so as to facilitate rehearsal of subsections. In so doing, the designer can also provide the student with an overview of the underlying structure of the material.

The images below illustrate some techniques to achieve this objective.

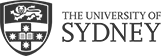
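For readers who publish their videos on the web, one way to index a video is with a chapter track. The following is a minimal sketch in Python, not a description of how the examples above were produced: it assumes the player accepts WebVTT chapter files, and the function names, segment titles and timings are all hypothetical.

```python
def format_timestamp(seconds: float) -> str:
    """Convert a time in seconds to the WebVTT form HH:MM:SS.mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def build_chapter_vtt(segments, video_length):
    """Build the text of a WebVTT chapter file.

    segments     -- list of (start_in_seconds, title) pairs, one per
                    conceptually discrete section of the video
    video_length -- total running time in seconds; the last segment
                    is assumed to run to the end of the video
    """
    ordered = sorted(segments)
    lines = ["WEBVTT", ""]
    for i, (start, title) in enumerate(ordered):
        # Each segment ends where the next one begins.
        end = ordered[i + 1][0] if i + 1 < len(ordered) else video_length
        if end <= start:
            raise ValueError(f"segment {title!r} has no duration")
        lines.append(f"{format_timestamp(start)} --> {format_timestamp(end)}")
        lines.append(title)
        lines.append("")
    return "\n".join(lines)


# Hypothetical segments and timings for a ten-minute video.
segments = [
    (0, "The shift from textbooks to videos"),
    (150, "The Modality Principle"),
    (420, "Signalling"),
]
print(build_chapter_vtt(segments, video_length=600))
```

The resulting text can be saved as a `.vtt` file and attached to an HTML video with a `<track kind="chapters">` element. Support for displaying chapters varies between players, so it is worth checking how your own platform presents them to students.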

The Modality Principle: engaging dual channels in a synchronous manner

All too often one sees videos where there is a lack of connectivity and synchronicity between the script and the visual overlays. Often this is because the presenter has written an extensive script for the filming session but provided very little in the way of associated overlays, leaving the video editors to scrounge around for stock images etc. to make the video engaging and “beautiful”. A better approach would be to note down some bullet points for the script and then see what images can be sourced or created. Then one could reconsider how the script might be fleshed out in light of what imagery is available. Where appropriate, one could then refer directly to the imagery, e.g. “this famous photo depicts…” or “the graph on the left shows…”. This strategy results in better integration between the audio and the visuals. It is common practice in PowerPoint presentations but is often overlooked in the production of videos. What needs to be achieved is synchronicity between the visual material and the audio narration. Why is it so important? Time for a touch of theory.

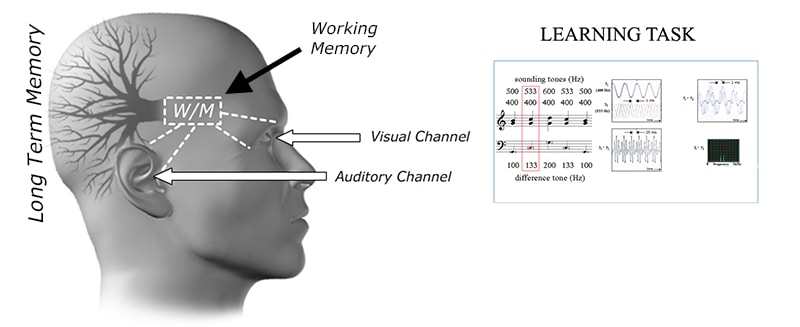

Traditional modes of acquiring information (e.g. from textbooks) only utilise one of the sensory modalities, i.e. the visual. Hence, if you have static images embedded in the text then the learner must split his/her visual attention between the images and the text. It may be necessary to “ping-pong” between the text and the images repeatedly in order to integrate the textual and visual information.

A much more efficient method of processing is for the text to be offloaded to the auditory channel as a narration so that the visual channel can be devoted solely to the visual material. Working memory is the bottleneck to information assimilation: it can only hold a few items of new information for a short period of time unless there is an opportunity to rehearse. The material therefore needs to be closely integrated between the channels to minimise the load on working memory. Working memory can then more efficiently process and encode the information into long-term memory, where meaningful learning takes place.

The effectiveness of using dual channels is maximised when the narrative in the audio channel is carefully and explicitly synchronised to the visuals. One way to ensure that there is optimal synchronicity between the two channels is to guide the student’s visual attention by using signalling techniques.

Signalling (or visual cues)

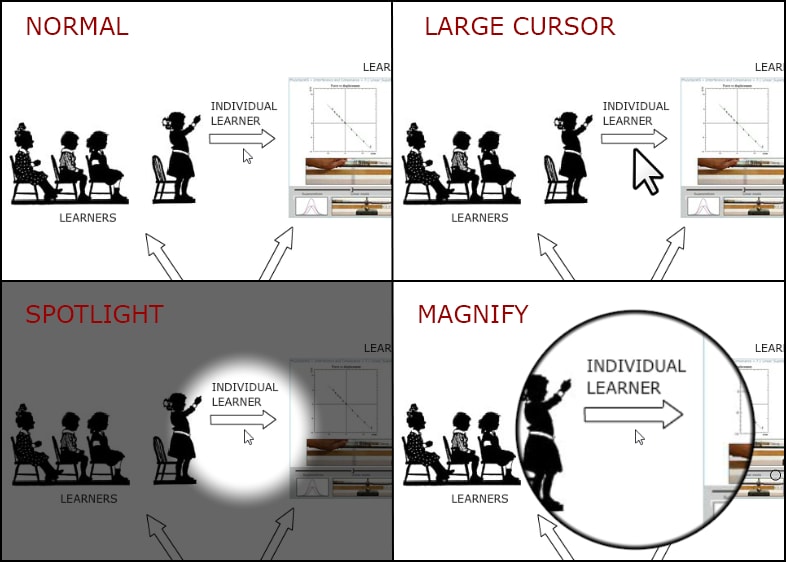

Often there are a number of interrelated visual elements on the screen at the same time, particularly when dealing with complex information such as scientific phenomena or mechanical processes. By using visual cues such as arrows, highlighting, fading the background, annotations etc., the presenter can signal to the student where his/her visual attention should be directed. These cues work best when synchronised with the narration, e.g. the narrator might say “here we can see…” whilst circling a visual element on the screen.

Some techniques for incorporating signalling cues are presented below.

Visual cues could also be something as simple as showing elements on a page sequentially as they are introduced in the narration rather than having them all appear at once. If you want to achieve this effect in the editing stage, then simply grab some white (or other colour if appropriate) shapes from the Camtasia menu and conceal visual elements until the right moment. Pen casting is, of course, another useful tool for signalling purposes.

How do I get started?

A do-it-yourself video recording booth is available for all University of Sydney staff. Find out more about this booth and how to use it to start making meaningful educational videos.