How close are we to reading minds?

The technology to decode our thoughts is drawing ever closer. Neuroscientists at the University of Texas have for the first time decoded data from non-invasive brain scans and used them to reconstruct language and meaning from stories that people hear, see or even imagine.

In a new study published in Nature Neuroscience, Alexander Huth and colleagues successfully recovered the gist of language and sometimes exact phrases from functional magnetic resonance imaging (fMRI) brain recordings of three participants.

Technology that can create language from brain signals could be enormously useful for people who cannot speak due to conditions such as motor neurone disease. At the same time, it raises concerns for the future privacy of our thoughts.

Language decoded

Language decoding models, also called “speech decoders”, aim to use recordings of a person’s brain activity to discover the words they hear, imagine or say.

Until now, speech decoders that reconstruct continuous language have only been used with data from devices surgically implanted in the brain, which limits their usefulness. Decoders that used non-invasive brain activity recordings have been able to decode single words or short phrases, but not continuous language.


The new research used the blood oxygen level dependent signal from fMRI scans, which shows changes in blood flow and oxygenation levels in different parts of the brain. By focusing on patterns of activity in brain regions and networks that process language, the researchers found their decoder could be trained to reconstruct continuous language (including some specific words and the general meaning of sentences).

Specifically, the decoder took the brain responses of three participants as they listened to stories, and generated sequences of words that were likely to have produced those brain responses. These word sequences did well at capturing the general gist of the stories, and in some cases included exact words and phrases.

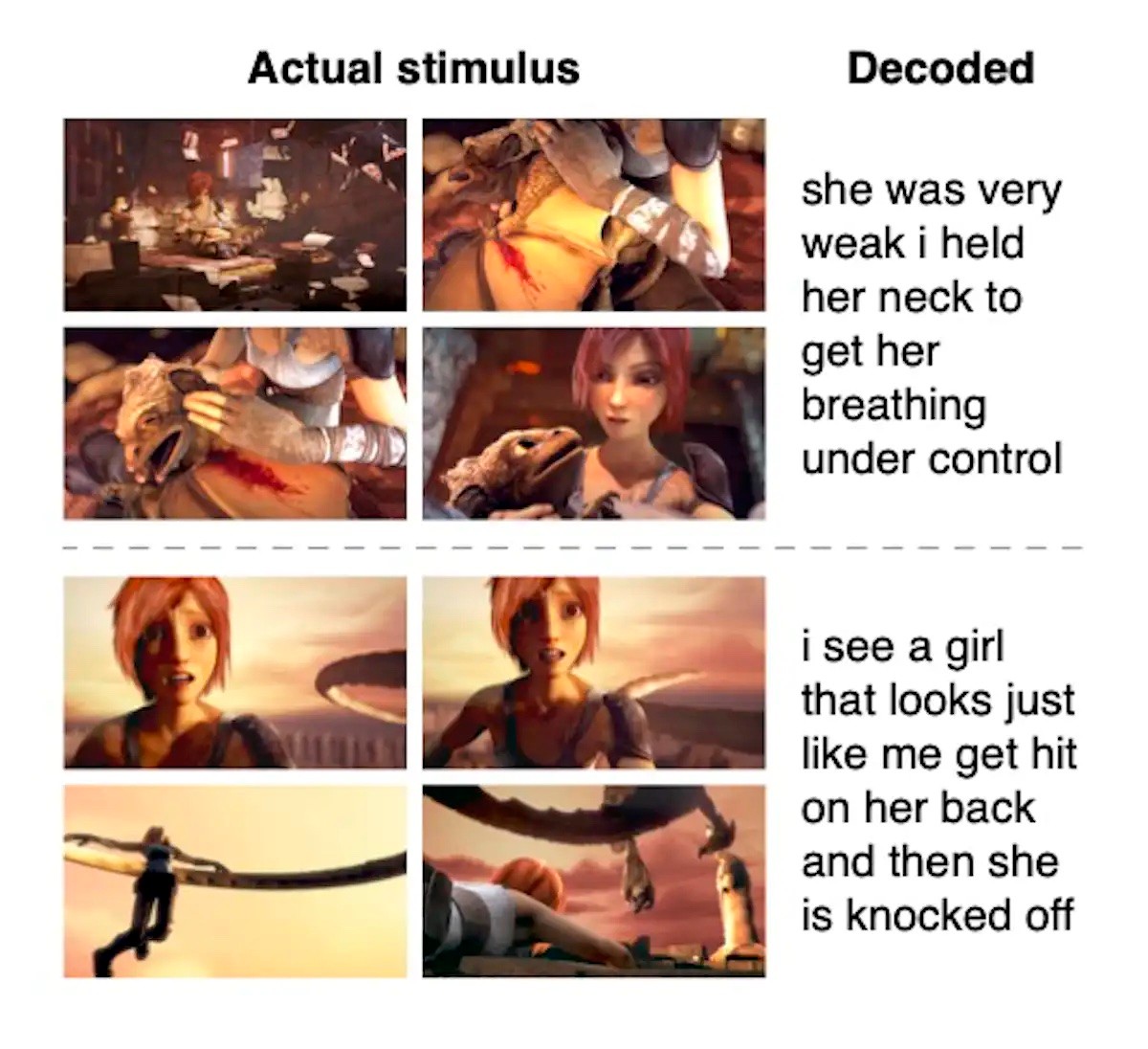

The researchers also had the participants watch silent movies and imagine stories while being scanned. In both cases, the decoder often managed to predict the gist of the stories.

For example, one participant thought “I don’t have my driver’s licence yet”, and the decoder predicted “she has not even started to learn to drive yet”.

Further, when participants actively listened to one story while ignoring another story played simultaneously, the decoder could identify the meaning of the story being actively listened to.

How does it work?

(Image caption: The decoder could also describe the action when participants watched silent movies. Credit: Tang et al. / Nature Neuroscience)

The researchers started out by having each participant lie inside an fMRI scanner and listen to 16 hours of narrated stories while their brain responses were recorded.

These brain responses were then used to train an encoder – a computational model that tries to predict how the brain will respond to the words a person hears. After training, the encoder could quite accurately predict how each participant’s brain signals would respond to hearing a given string of words.
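To make the idea of an encoder concrete, here is a minimal sketch in Python. It is not the study's model: it assumes a single made-up numeric "semantic feature" per word and fits a simple least-squares line from that feature to each voxel's response. The function names (`fit_voxel`, `fit_encoder`, `predict`) and all the numbers are hypothetical, chosen only for illustration.

```python
from typing import List, Sequence, Tuple


def fit_voxel(features: Sequence[float], responses: Sequence[float]) -> Tuple[float, float]:
    """Fit response ~ slope * feature + intercept by ordinary least squares."""
    n = len(features)
    mean_x = sum(features) / n
    mean_y = sum(responses) / n
    var_x = sum((x - mean_x) ** 2 for x in features)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(features, responses))
    slope = cov_xy / var_x
    return slope, mean_y - slope * mean_x


def fit_encoder(features: Sequence[float],
                voxel_responses: Sequence[Sequence[float]]) -> List[Tuple[float, float]]:
    """Fit one independent line per voxel (a toy stand-in for an encoder)."""
    return [fit_voxel(features, responses) for responses in voxel_responses]


def predict(encoder: Sequence[Tuple[float, float]], feature: float) -> List[float]:
    """Predict every voxel's response to a new feature value."""
    return [slope * feature + intercept for slope, intercept in encoder]


# Made-up training data: one feature value per heard word, two voxels.
features = [0.0, 1.0, 2.0, 3.0]
voxel_responses = [
    [1.0, 3.0, 5.0, 7.0],  # voxel 0 happens to follow 2 * x + 1
    [4.0, 3.5, 3.0, 2.5],  # voxel 1 happens to follow -0.5 * x + 4
]

encoder = fit_encoder(features, voxel_responses)
prediction = predict(encoder, 4.0)  # predicted responses to an unseen word
```

The point of the sketch is only the direction of the mapping: words go in, predicted brain responses come out.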

However, going in the opposite direction – from recorded brain responses to words – is trickier.

The encoder model is designed to link brain responses with “semantic features” or the broad meanings of words and sentences. To do this, the system uses the original GPT language model, which is the precursor of today’s GPT-4 model. The decoder then generates sequences of words that might have produced the observed brain responses.

The accuracy of each “guess” is then checked by using it to predict previously recorded brain activity, with the prediction then compared to the actual recorded activity.

During this resource-intensive process, multiple guesses are generated at a time, and ranked in order of accuracy. Poor guesses are discarded and good ones kept. The process continues by guessing the next word in the sequence, and so on until the most accurate sequence is determined.
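The guess, score and prune loop described above resembles what computer scientists call a beam search. The following Python sketch shows the general shape under heavy simplifying assumptions: a four-word vocabulary with made-up two-number "semantic features", a toy encoder that simply returns those features, one word per recorded time step, and every vocabulary word proposed at each step (in the study, a language model proposes the candidates). All names and values are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Vector = Tuple[float, float]

# Hypothetical toy vocabulary with made-up semantic features.
WORD_FEATURES: Dict[str, Vector] = {
    "she": (1.0, 0.0),
    "drives": (0.0, 1.0),
    "walks": (0.2, 0.8),
    "home": (0.5, 0.5),
}


def encode(words: Sequence[str]) -> List[Vector]:
    """Toy encoder: the predicted response to a word is its feature vector."""
    return [WORD_FEATURES[word] for word in words]


def score(words: Sequence[str], recorded: Sequence[Vector]) -> float:
    """Negative squared error between predicted and recorded responses.

    Higher is better; a perfect guess scores 0.
    """
    predicted = encode(words)
    return -sum(
        (p - r) ** 2
        for pred_vec, rec_vec in zip(predicted, recorded)
        for p, r in zip(pred_vec, rec_vec)
    )


def beam_decode(recorded: Sequence[Vector], beam_width: int = 2) -> List[str]:
    """Extend guesses one word at a time, keeping only the best few."""
    beams: List[Tuple[str, ...]] = [()]
    for step in range(1, len(recorded) + 1):
        # Propose every one-word continuation of every surviving guess.
        candidates = [beam + (word,) for beam in beams for word in WORD_FEATURES]
        # Rank guesses by how well they predict the activity recorded so far.
        candidates.sort(key=lambda c: score(c, recorded[:step]), reverse=True)
        # Discard poor guesses, keep the good ones.
        beams = candidates[:beam_width]
    return list(beams[0])


# Made-up "recorded" activity, one vector per time step.
recorded_activity = [(0.9, 0.1), (0.05, 0.95), (0.5, 0.4)]
decoded = beam_decode(recorded_activity)
```

With these made-up numbers, `decoded` is `["she", "drives", "home"]`. Keeping more than one guess alive at each step is what lets the search recover when an early word is ambiguous.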

Words and meanings

The study found data from multiple, specific brain regions – including the speech network, the parietal-temporal-occipital association region, and prefrontal cortex – were needed for the most accurate predictions.

One key difference between this work and earlier efforts is the data being decoded. Most decoding systems link brain data to motor features or activity recorded from brain regions involved in the last step of speech output, the movement of the mouth and tongue. This decoder works instead at the level of ideas and meanings.

One limitation of using fMRI data is its low “temporal resolution”. The blood oxygen level dependent signal rises and falls over a period of approximately 10 seconds, during which time a person might have heard 20 or more words. As a result, this technique cannot detect individual words, but only the potential meanings of sequences of words.
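The arithmetic behind that limitation is simple. The short Python sketch below assumes a speech rate of two words per second, which is the rate implied by the figures above rather than a number reported separately; the function name is hypothetical.

```python
def words_per_window(words_per_second: float, window_seconds: float) -> float:
    """Number of words heard while one slow fMRI response rises and falls."""
    return words_per_second * window_seconds


# Assumed speech rate of 2 words per second over a 10-second signal window.
overlapping_words = words_per_window(2.0, 10.0)
```

Under that assumption, about 20 words fall inside a single rise and fall of the signal, so their contributions are blended together.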

No need for privacy panic (yet)

The idea of technology that can “read minds” raises concerns over mental privacy. The researchers conducted additional experiments to address some of these concerns.

These experiments showed we don’t need to worry just yet about having our thoughts decoded while we walk down the street, or indeed without our extensive cooperation.

A decoder trained on one person’s thoughts performed poorly when predicting the semantic detail from another participant’s data. What’s more, participants could disrupt the decoding by diverting their attention to a different task such as naming animals or telling a different story.

Movement in the scanner can also disrupt the decoder as fMRI is highly sensitive to motion, so participant cooperation is essential. Considering these requirements, and the need for high-powered computational resources, it is highly unlikely that someone’s thoughts could be decoded against their will at this stage.

Finally, the decoder does not currently work on data other than fMRI, which is an expensive and often impractical procedure. The group plans to test their approach on other non-invasive brain data in the future.

This article is republished from The Conversation. It was written by Christina Maher, University of Sydney.